TL;DR: Registries vs. Gateways vs. Runtimes

- MCP Registry: A catalog for tool discovery and governance. Use it to enforce an allowlist for your software supply chain by curating a list of approved tools.

- MCP Gateway: A routing layer for connecting models to tools. Use it for rapid development with non-sensitive data, typically using a single shared service account.

- MCP Runtime: A secure execution platform that hosts tools and executes actions on behalf of authenticated users. Use it for enterprise applications that require per-user authorization (OAuth/OBO), vaulted credentials, and structured audit logs.

- The key risk: Using a gateway without per-user permissions exposes sensitive code to prompt-injection attacks and sets you up for failed compliance audits.

- Secure deployments require: Vaulted secrets kept outside the LLM context window and OpenTelemetry-compatible audit logs that tie every action back to a specific user identity.

- The verdict: Choose runtimes like Arcade.dev for secure, multi-user agents acting on production systems; use gateways for simpler, low-risk integrations.

Enterprise engineering leads need to connect models like Claude and ChatGPT to core enterprise tools such as GitHub, GitLab, and Jira. Today, building that connection usually means one of two things: writing custom servers or granting language models broad access to proprietary code.

The Model Context Protocol ecosystem has expanded rapidly, but the industry continues to conflate tool discovery, request routing, and secure runtime execution.

Without a clear architectural standard, platform teams deploy fragile, insecure connections that expose proprietary codebases to injection attacks.

This guide establishes a 2026 taxonomy for the ecosystem, separates discovery from execution, and shows why production agentic workloads require Runtime-level infrastructure. Treating a basic gateway like a secure runtime is a common path to failed security and compliance audits.

Why DIY MCP servers fail in production

Most engineering teams start their agentic journey using Anthropic’s reference implementations or open-source Python SDKs. These tools work well for prototyping workflows on a local laptop. But push them into a multi-user production environment, and you hit a steep DIY wall.

Production immediately exposes architectural fractures. Teams encounter severe token leakage risks, complex rate-limiting architectures across applications, and zero enterprise audit trails.

For example, GitHub enforces point-based rate limits on its GraphQL API. Naive AI agents stuck in retry loops will quickly exhaust these quotas and break agent workflows.

But the most critical breaking point is authorization. When you deploy a custom server, you must decide how the agent authenticates against backend tools.

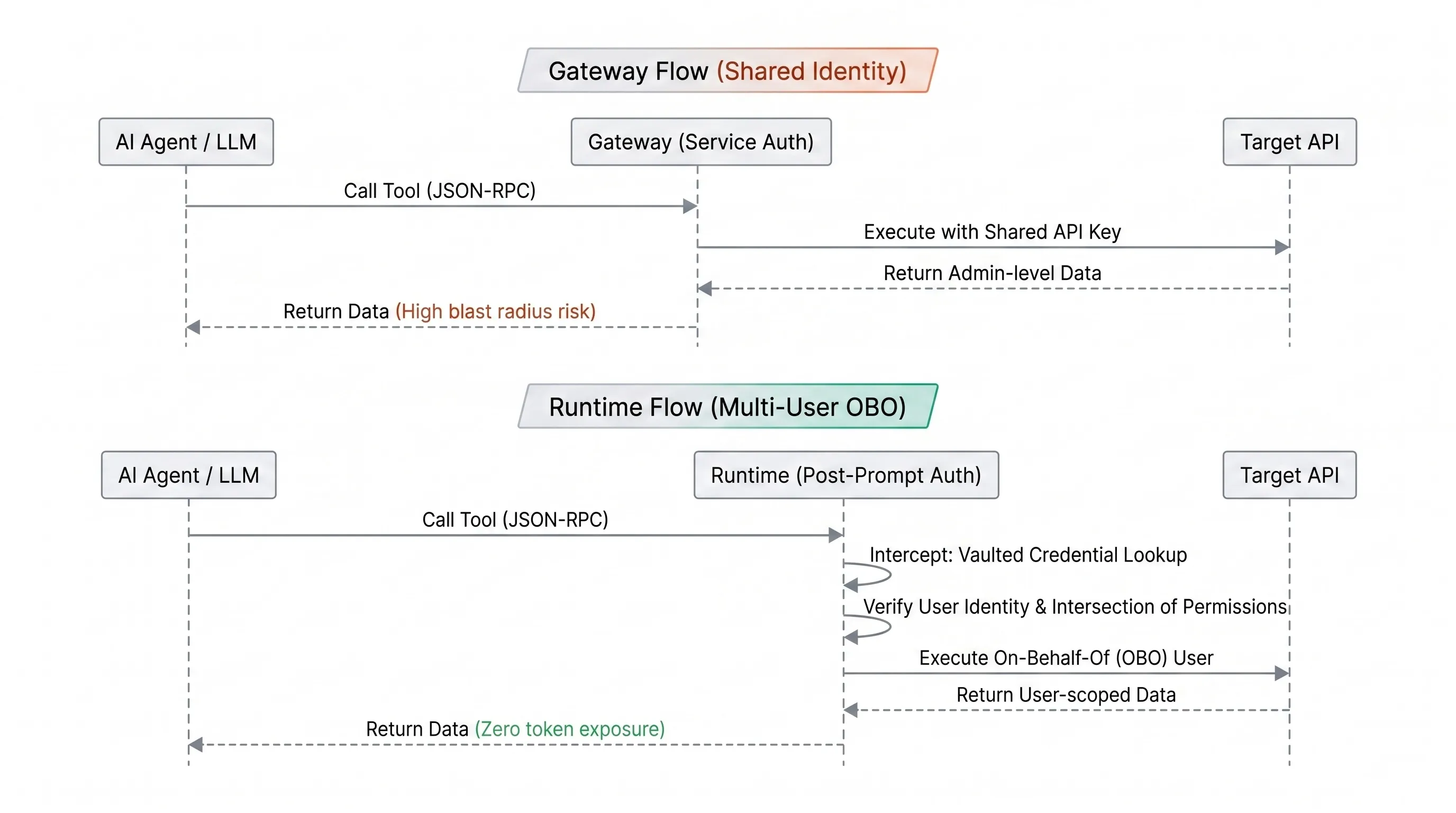

Granting an agent its own generic service account identity is the standard gateway approach. This method bypasses native user permissions. If an agent is compromised via indirect prompt injection, a critical vulnerability detailed in the OWASP Top 10 for Large Language Model Applications, the shared identity creates a wide blast radius. The prompt injection cascades through your source code repositories with unbounded administrative access.

The alternative is to inherit the user’s access dynamically on each request, which requires stateful authorization flows, refresh logic, and credential vaulting. A stateless proxy evaluates each request in isolation. It can’t track that a request is step 3 of a 6-step workflow, acting on behalf of a specific user who authorized a particular scope minutes ago. That session awareness is what makes per-user, per-tool authorization enforceable, and stateless layers cannot enforce it without significant custom state management.

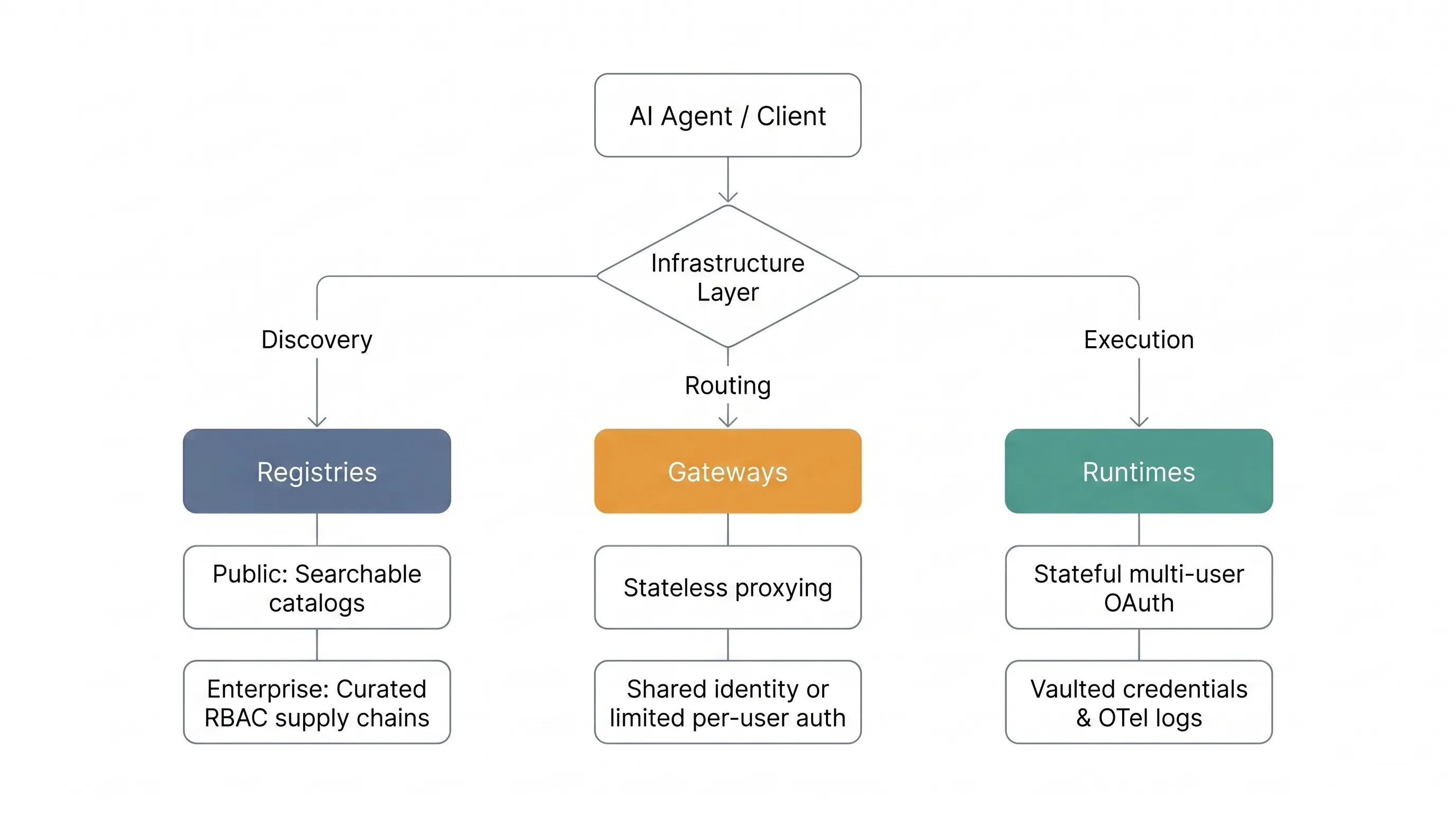
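To make the session-awareness point concrete, here is a minimal sketch of the state a stateless proxy does not keep. `Grant`, `SessionStore`, and the scope names are hypothetical and do not come from any MCP SDK. A production system would persist this state and handle token refresh as well.

```python
from dataclasses import dataclass, field
import time


@dataclass
class Grant:
    """A set of scopes one user approved for one workflow session (hypothetical model)."""
    user_id: str
    scopes: frozenset
    expires_at: float  # Unix timestamp


@dataclass
class SessionStore:
    """In-memory stand-in for the state a stateless proxy does not keep."""
    grants: dict = field(default_factory=dict)

    def authorize(self, session_id, user_id, scopes, ttl_seconds, now=None):
        """Record that this user approved these scopes for this session."""
        now = time.time() if now is None else now
        self.grants[session_id] = Grant(user_id, frozenset(scopes), now + ttl_seconds)

    def is_allowed(self, session_id, user_id, scope, now=None):
        """Allow a step only if the same user approved this scope and the grant is still valid."""
        now = time.time() if now is None else now
        grant = self.grants.get(session_id)
        if grant is None or grant.user_id != user_id:
            return False  # unknown session, or a different user
        if now >= grant.expires_at:
            return False  # the earlier approval has expired
        return scope in grant.scopes


store = SessionStore()
store.authorize("wf-1", "alice", {"repo:read"}, ttl_seconds=600, now=1000.0)
```

Every later step in the workflow calls `is_allowed` with the same session ID, so the check depends on what the user approved earlier rather than on the current request alone.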

MCP taxonomy (2026): Registries, Gateways, and Runtimes

To evaluate infrastructure effectively, you need to understand the functional boundaries of the ecosystem.

Figure: MCP infrastructure taxonomy: Registries vs Gateways vs Runtimes (2026)

Category 1: MCP Registries for discovery

Registries are catalogs designed to solve tool discovery. They don’t host, authorize, or execute calls. This category splits into two types:

- Public Registries: Platforms serving as searchable public catalogs solve initial tool discovery but offer no mechanisms to govern calls securely.

- Enterprise Registries: Platforms that act as supply-chain allowlists provide curated internal catalogs, ensuring agents use only organizationally approved servers and blocking malicious public endpoints. Some enterprise registries add role-based access control and audit logs, but these capabilities vary by vendor.

When evaluating public registries, prioritize breadth of catalog and metadata accuracy. For enterprise registries, focus on security scanning, policy enforcement, and integration with existing software supply chain tools.
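The allowlist idea behind an enterprise registry can be sketched in a few lines. The host names, pinned versions, and the `is_approved` helper are all assumptions made for illustration. A real registry would add signature checks, security scanning, and policy enforcement on top.

```python
from urllib.parse import urlparse

# Hypothetical curated catalog: approved server hosts mapped to a pinned version.
APPROVED_SERVERS = {
    "mcp.internal.example.com": "1.4.2",
    "tools.internal.example.com": "2.0.0",
}


def is_approved(server_url: str, version: str) -> bool:
    """Return True only for an HTTPS URL whose host and version are on the allowlist."""
    parsed = urlparse(server_url)
    if parsed.scheme != "https":
        return False
    # An unknown host yields None from .get(), which never equals a version string.
    return APPROVED_SERVERS.get(parsed.hostname) == version
```

An agent platform would call a check like this before connecting to any server, so unlisted public endpoints are rejected by default.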

Category 2: MCP Gateways for routing

Traditional API gateway vendors like Kong and MuleSoft are retrofitting MCP support onto architectures designed for stateless HTTP traffic. They bridge the protocol gap by translating MCP into HTTP and applying policies using HTTP-era constructs like API keys, rate limits, and endpoint ACLs. This translation layer introduces latency, loses session context, and cannot enforce per-user, per-tool authorization. Bolting MCP onto an API gateway is not a pivot. It’s a patch.

Purpose-built MCP Gateways and integration platforms like Composio, Merge, and AWS AgentCore are a step above. They offer pre-built tool integrations and centralized routing designed for agent workflows. But they still lack the runtime-level permission intersection required for true per-user execution, leaving the hardest security problems unsolved.

When evaluating these platforms, focus on three areas:

- Spec compliance & reliability: Ensure implementations are fully spec-compliant MCP servers, not thin API wrappers with an MCP interface. Many early gateways fall into this trap, producing tools that lead agents to hallucinate parameters under real-world, concurrent workloads. The official Model Context Protocol specification demands strict adherence to message structures, including capability negotiation, initialization handshakes, and lifecycle management, which simple wrappers fail to maintain. Thin wrappers that pass raw API responses through unmodified can produce 100x more tokens than intent-level tools for identical queries, overflowing context windows and degrading agent performance in multi-step workflows.

- Private infrastructure support: Assess whether the gateway can connect to private cloud environments or on-prem deployments. Support for self-hosted developer tools is often limited or requires complex networking to avoid exposing internal networks to the public internet.

- Authorization & policy: Most gateways rely on a single key or service account. They leave per-user authorization as an exercise for the buyer, creating a massive security gap in multi-tenant environments.
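As a rough illustration of the initialization handshake mentioned under spec compliance, the messages below follow the JSON-RPC shape used by MCP lifecycle negotiation. The client and server names, the version string, and the `handshake_ok` helper are placeholders, so check the field list against the specification revision you target.

```python
# Illustrative initialize request a client sends first (values are placeholders).
initialize_request = {
    "jsonrpc": "2.0",
    "id": 1,
    "method": "initialize",
    "params": {
        "protocolVersion": "2025-03-26",
        "capabilities": {},
        "clientInfo": {"name": "example-client", "version": "0.1.0"},
    },
}

# Illustrative server result advertising that it offers tools.
initialize_response = {
    "jsonrpc": "2.0",
    "id": 1,
    "result": {
        "protocolVersion": "2025-03-26",
        "capabilities": {"tools": {"listChanged": True}},
        "serverInfo": {"name": "example-server", "version": "0.1.0"},
    },
}

# After a successful response, the client sends this notification (it carries no id).
initialized_notification = {"jsonrpc": "2.0", "method": "notifications/initialized"}


def handshake_ok(request: dict, response: dict) -> bool:
    """Hypothetical check for fields a thin wrapper is likely to omit or mismatch."""
    result = response.get("result", {})
    return (
        response.get("jsonrpc") == "2.0"
        and response.get("id") == request.get("id")
        and isinstance(result.get("capabilities"), dict)
        and "protocolVersion" in result
        and "serverInfo" in result
    )
```

A check of this kind is a quick smoke test during evaluation. It does not replace a full conformance suite.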

Category 3: MCP Runtimes for secure execution

Runtimes represent the highest tier of the taxonomy. They’re full execution platforms combining hosted tool execution, spec-compliant server implementations, multi-user just-in-time authorization (credentials are acquired only at the moment an action requires them, scoped to the specific tool and user, and never exposed to the LLM or the MCP client), vaulted credentials, policy guardrails, and complete governance into a single managed environment.

Runtimes specifically mitigate the threat of tool poisoning. By isolating credentials and enforcing user-level permissions at execution time, after the language model has generated a request, runtimes ensure that a compromised agent can’t access intellectual property beyond the authenticated user’s native privileges.

The authorization model enforces a strict permission intersection: Agent Permissions ∩ User Permissions = Effective Action Scope. The agent can only execute an action if both the agent’s role policy and the human user’s native SaaS permissions explicitly allow it. Every other combination is denied.

For example, an enterprise AI agent assists the Human Resources department. An employee using this agent has administrative privileges in Workday, including access to global payroll data. But the HR agent itself is scoped strictly to recruiting tasks. When prompted to access payroll data, the runtime evaluates the intersection at call time and denies the request. The user has the authority, but the agent’s restricted scope blocks the action. This enforces least-privilege at the agent layer, blocking both data exfiltration and unauthorized access.
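The HR example maps directly onto a set intersection. The sketch below uses hypothetical scope names and is not tied to any vendor’s policy engine.

```python
# Hypothetical permission sets for the HR example; scope names are illustrative.
AGENT_PERMISSIONS = {"recruiting:read", "recruiting:write"}
USER_PERMISSIONS = {
    "recruiting:read",
    "recruiting:write",
    "payroll:read",
    "payroll:write",
}


def effective_scope(agent: set, user: set) -> set:
    """Effective Action Scope = Agent Permissions ∩ User Permissions."""
    return agent & user


def is_permitted(action: str, agent: set, user: set) -> bool:
    """Allow an action only when both the agent and the user hold it."""
    return action in effective_scope(agent, user)
```

Here `is_permitted("payroll:read", AGENT_PERMISSIONS, USER_PERMISSIONS)` is false even though the user holds the permission, because the agent does not.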

Figure: MCP Gateway vs Runtime authorization flow: shared identity vs per-user OBO

How to evaluate MCP Runtimes

- Per-user OAuth and RBAC: A true runtime evaluates the intersection of agent permissions and the calling user’s permissions for every single action at the function level, not just the tool level. This means enabling `process_claim` and `get_claim_status` while blocking `deny_claim` for a specific user, through a specific agent, at runtime. It handles token refresh, mismatch, and rotation according to modern IETF OAuth specifications, keeping these functions isolated from the language model context window.

- Private infrastructure support: Runtimes must securely connect to self-hosted source control servers and internal developer platforms using secure tunneling or peering, without exposing them to the open web.

- Audit and policy: The platform must output OpenTelemetry-compatible logs that track every action, explicitly tied to a specific user and service. The platform should also support pre-call and post-call policy hooks to validate and intercept risky requests in real-time.

- Runtime reliability: The platform should provide a developer-defined execution context for intelligent retries, parallel execution for multi-step tasks, and automatic failover to handle rate limits and transient network errors.
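The function-level policy and the pre-call and post-call hooks described in the list above can be sketched as follows. The policy table, the `PolicyDenied` exception, and the field-stripping rule are hypothetical. The function names `process_claim`, `get_claim_status`, and `deny_claim` come from the example in the text.

```python
# Hypothetical function-level policy: (user, agent) -> allowed function names.
ALLOWED_FUNCTIONS = {
    ("alice", "claims-agent"): {"process_claim", "get_claim_status"},
}


class PolicyDenied(Exception):
    """Raised when a hook blocks a call."""


def pre_call(user: str, agent: str, function: str) -> None:
    """Pre-call hook: reject functions not allowed for this user through this agent."""
    if function not in ALLOWED_FUNCTIONS.get((user, agent), set()):
        raise PolicyDenied(f"{function} is not allowed for {user} via {agent}")


def post_call(result: dict) -> dict:
    """Post-call hook: drop a field that should not reach the model (illustrative rule)."""
    return {key: value for key, value in result.items() if key != "ssn"}


def guarded_call(user: str, agent: str, function: str, tool):
    """Run a zero-argument tool callable between the two hooks."""
    pre_call(user, agent, function)
    return post_call(tool())
```

With this policy, a call to `deny_claim` by `alice` through `claims-agent` raises `PolicyDenied` before the tool runs at all.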

Best MCP Gateways, Runtimes, and Registries (top 8)

The ecosystem currently features eight notable platforms across the defined taxonomy. This feature matrix maps each platform to the critical evaluation criteria for enterprise DevOps deployments, enabling teams to quickly identify the best architectural fit.

| Platform | Category | Per-user auth | Private infra support | Tool reliability | Audit logging | Best use case |
|---|---|---|---|---|---|---|
| Arcade | Runtime | Yes (OBO) | Yes (VPC/Secure Tunnel) | Yes (Retries, failover, parallel execution) | Yes (OTel) | Production teams needing secure multi-user agents on any system. |
| Composio | Gateway | Partial (Per-user OAuth; no agent/user permission intersection) | Yes (Self-hosted) | Limited (No field selection; errors require multiple retries) | Limited (Web UI) | Prototyping and embedding integrations into projects. |
| Merge MCP | Gateway | Partial (Per-identity auth; scope limited to integration category) | Limited | Limited (API-level retries) | Yes (API logs) | Unified API for B2B SaaS integrations (strongest in HRIS and recruiting per G2). |
| AWS AgentCore | Gateway | Partial (Outbound OAuth; OBO requires custom configuration) | Yes (AWS only) | Partial (Requires custom orchestration) | Yes (via CloudWatch, requires additional work) | Teams fully committed to the AWS Bedrock ecosystem. |
| WorkOS | Auth Sidecar | Yes (SSO/SCIM) | N/A | N/A | Yes (API) | Identity layer bolt-on for custom-built routing gateways. |
| JFrog MCP | Registry | N/A | Yes (Artifactory) | N/A | Yes (Standard) | Supply chain allowlist for approved internal enterprise tools. |
| Smithery | Registry | N/A | No | No | No (Usage stats) | Public discovery of community-built open-source tools. |
| Anthropic DIY | DIY Code | No (Build your own) | Yes (Custom build) | No (Build your own) | No (Build your own) | Open-source reference implementations and hobby projects. |

Arcade.dev (runtime)

Overview: Arcade.dev is a purpose-built execution runtime for the Model Context Protocol. Unlike stateless routing layers, Arcade operates as a full runtime that unifies agent authorization, an extensive agent-optimized tool catalog, and lifecycle governance into a single control plane. The runtime speaks MCP natively via Streamable HTTP transport with no protocol translation and no context loss.

Best for: Production teams that require secure, multi-user agents that can act on any system, from source code and CI/CD to productivity tools and business applications.

Key strengths:

Arcade differentiates itself by providing over 8,000 agent-optimized tools alongside native, multi-user just-in-time authorization. These are intent-level tools, not raw API wrappers. They absorb the ambiguity of an agent’s request and translate it into a safe, predictable transaction. When a user says, “make the intro paragraph in my Google Doc sound friendlier,” Arcade’s tools translate that into the correct segment ID, character index, and replacement text. The agent never reasons about the underlying API schema. This prevents the parameter hallucination loops that break agents wired directly to REST endpoints.

Every MCP request in Arcade carries two identity layers: a project-level key (which application is making the request) and a user-level identity (on whose behalf the action is taken). Arcade evaluates the intersection of agent and user permissions dynamically at runtime to prevent privilege escalation. Arcade hooks into existing enterprise identity systems like Okta, Entra, and SailPoint, acquiring scoped tokens at runtime and enforcing the enterprise’s existing policies rather than duplicating them.

Arcade generates structured, OpenTelemetry-compatible audit logs, providing the observability required to meet enterprise compliance requirements and trace actions back to individual user sessions. Arcade’s SOC 2 Type 2 certification validates these controls through an independent audit. Visibility filtering ensures agents only see tools the current user is permitted to invoke. Version control lets platform engineers upgrade tool schemas and rotate connection parameters without breaking running agents.

Arcade also includes its own MCP Gateway, but this is not a Category 2 routing layer. It’s a runtime-level composition layer that operates inside the runtime’s security boundary, inheriting all of its authorization, credential vaulting, and audit capabilities. It federates tools from multiple sources into team-scoped, identity-scoped collections. An enterprise can create Gateways scoped by team or security boundary: Engineering gets GitHub, Linear, and deployment tools; Sales gets Salesforce, Gong, and email tools. Each is a single URL usable in any MCP client (Cursor, Claude Desktop, VS Code, ChatGPT, or custom applications). Each Gateway can embed LLM instructions, skills, and context to help the agent use tools effectively. Teams can also bring their own existing MCP servers into the runtime to gain authorization, retries, and audit logs without rewriting what already works.

The tradeoff: Arcade is purpose-built for production multi-user deployments and handles the infrastructure complexity natively. For single-user hobbyist projects or local scripts, a full runtime adds unnecessary overhead.

Composio (gateway)

Overview: Composio is an integration platform that provides a large library of pre-built connectors between language models and downstream applications.

Best for: Developers prototyping agents or embedding third-party integrations into projects where speed matters more than security and governance.

Key strengths: Composio’s primary value is its connector library. It reduces the boilerplate code required to connect an agent to various endpoints, abstracting away initial integration complexity so developers can focus on prompt engineering.

The tradeoff: Composio’s strength is the breadth of its connector library, not its routing architecture. Its default logging behavior can expose sensitive request and response payloads, creating a compliance risk for teams handling protected personal data. In published benchmarks, Composio’s raw API passthrough approach produced 100x more response tokens than intent-level tooling for identical CRM queries, consuming 373% of a 200K context window where Arcade consumed 3.7%. At scale, this token overhead translates to degraded agent accuracy, context window overflow in multi-step workflows, and significant cost differences. Full benchmark data is open source at github.com/ArcadeAI/attio-mcp-benchmark. Composio also lacks the granular, tool-level role-based access control and dedicated governance interfaces natively found in true execution runtimes.
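The percentages quoted above convert to token counts with simple arithmetic. The sketch assumes that "200K" means exactly 200,000 tokens and uses only the two percentages from the text.

```python
CONTEXT_WINDOW_TOKENS = 200_000  # assumption: "200K" means exactly 200,000 tokens

# Percentages from the benchmark figures quoted above, kept as integer ratios.
passthrough_tokens = CONTEXT_WINDOW_TOKENS * 373 // 100   # 373% of the window
intent_level_tokens = CONTEXT_WINDOW_TOKENS * 37 // 1000  # 3.7% of the window

ratio = passthrough_tokens / intent_level_tokens

print(passthrough_tokens)   # 746000
print(intent_level_tokens)  # 7400
print(round(ratio))         # 101
```

A response of roughly 746,000 tokens cannot fit in a 200,000-token window, which is the overflow the benchmark describes. The ratio of about 101 is consistent with the "100x" figure.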

Merge MCP (gateway)

Overview: Merge brings its legacy as a unified integration provider into the agentic space, offering a gateway that maps its existing normalized data models into protocol-compliant server endpoints.

Best for: Applications where the primary challenge is data fragmentation across B2B SaaS categories like HRIS, recruiting, and ticketing. Merge’s unified data models provide a clean, normalized interface across vendors in these categories. If your agents need to act on these systems with per-user authorization and audit trails, you need a Runtime layer that Merge does not provide.

Key strengths: Merge excels at handling unified interfaces for HR, ticketing, and accounting systems. If your agent needs to synchronize data across twenty different applicant tracking systems simultaneously, Merge provides a clean, standardized interface that prevents parameter hallucination.

The tradeoff: Merge isn’t natively built for DevOps or core engineering workflows. It lacks deep integration with specialized CI/CD tools, making it less suitable for agents designed to manipulate source code, manage cloud infrastructure, or resolve complex pull requests within isolated VPCs.

AWS AgentCore (gateway)

Overview: Amazon’s native entry into the ecosystem is a managed agent infrastructure platform with a built-in routing layer that lets developers convert existing services and serverless functions into spec-compliant endpoints securely within their cloud.

Best for: Engineering teams already committed to AWS Bedrock and the wider Amazon Web Services ecosystem.

Key strengths: AgentCore provides excellent native integration with AWS CloudWatch for detailed performance metrics and uses IAM for outbound authorization to AWS-native services. AgentCore handles basic inbound authentication via Amazon Cognito and offers native infrastructure scaling.

The tradeoff: AWS AgentCore comes with heavy ecosystem lock-in, and it operates strictly as a gateway. It supports basic authorization flows, but constructing a cohesive, multi-user runtime with fine-grained governance requires stitching together Cognito, IAM, CloudWatch, and other services, each managed, configured, and monitored separately. The operational overhead compounds as agent complexity grows.

WorkOS (auth sidecar)

Overview: WorkOS isn’t a Model Context Protocol platform itself. It’s an enterprise identity and authentication sidecar that teams use to patch the security gaps in basic routing gateways.

Best for: Organizations building custom infrastructure that need to implement enterprise single sign-on and directory synchronization quickly.

Key strengths: WorkOS provides strong infrastructure for identity management, fully supporting SCIM and automated user provisioning. It lets developers offload the complexity of user lifecycle management, session handling, and core token issuance.

The tradeoff: Because WorkOS is an authentication layer rather than an execution platform, teams must manually bolt WorkOS onto their existing gateways. This integration adds cost, latency, and architectural complexity. Developers must manually wire the identity provider’s tokens into their tool execution logic to achieve true multi-user isolation.

JFrog MCP (registry)

Overview: Moving away from routing and execution entirely, the JFrog MCP Registry is a dedicated enterprise discovery and governance catalog. JFrog treats agent tools as standard, scannable software artifacts.

Best for: Enterprise security and platform engineering teams needing to curate a strictly approved list of tools for internal developers and agent workloads.

Key strengths: JFrog acts as a critical supply chain allowlist. By providing strict role-based access controls and detailed audit logs at the perimeter, JFrog enables enterprises to create a highly curated internal catalog. This approach blocks malicious or unverified public endpoints from entering the corporate network.

The tradeoff: JFrog is strictly a discovery and policy layer. It doesn’t provide an execution environment or live routing layer. After discovering and approving a tool through the registry, teams still need a dedicated gateway or runtime to invoke and execute the tool securely against real data.

Smithery (registry)

Overview: Smithery is currently the canonical public discovery engine for the ecosystem, functioning as the primary open marketplace for finding and distributing community-built servers.

Best for: Solo developers, researchers, and hobbyists looking to quickly find, experiment with, and test community-built servers on local machines.

Key strengths: Smithery provides broad visibility into the leading edge of the ecosystem. If a new, niche API is released, a community-built connector for it will likely appear on Smithery within days. It’s ideal for rapid, low-friction prototyping.

The tradeoff: Smithery is designed purely for discovery and basic local execution. It lacks the enterprise governance mechanisms, strong access controls, and strict security vetting processes required by corporate security teams. Smithery can’t solve execution, routing, or secure infrastructure challenges, making it unsafe to wire directly into production engineering workflows handling sensitive data.

Anthropic official servers and DIY Python SDK (DIY)

Overview: For teams opting to build from scratch, Anthropic provides open-source reference implementations and SDKs for platforms like GitHub and Slack to demonstrate core capabilities.

Best for: Highly specialized, deeply custom architectures where pre-built commercial platforms can’t accommodate proprietary, legacy internal protocols.

Key strengths: Building your own infrastructure provides complete control over every byte of data and every network request. Custom builds serve as a strong educational foundation for understanding exactly how the protocol handles capability negotiation and context exchange.

The tradeoff: The engineering burden is immense. You’re responsible for building, maintaining, and scaling your own multi-user authorization pipelines, routing rules, network security boundaries, and audit plumbing from scratch. The reference servers are also published as demonstrations of the protocol rather than as production-hardened services, so hardening them is your job as well. This approach results in high maintenance costs and pulls engineering focus away from building product features.

5-step runbook for deploying MCP agents in production

Enterprise AI deployments handling sensitive code, customer data, or regulated systems follow the same five-step path, regardless of team.

- Map the blast radius: Identify your target systems and classify the sensitivity of the data and actions the agent will access before writing any code.

- Choose your architecture: Select the appropriate platform category (Registry, Gateway, or Runtime) based on the security and governance baselines you established in the first step.

- Configure identity mapping: Never use shared keys for sensitive data. Tie all agent actions back to real, verifiable human identities from your primary identity provider using modern authorization flows.

- Implement visibility filtering: Enforce strict catalog access. Ensure agents can only dynamically discover and invoke the specific tools that the authenticated user is explicitly permitted to use.

- Test failover and retries: Validate how your chosen platform handles transient errors, rate limits, and tool failures. Rely on structured retry logic and exponential backoff, rather than depending on unbounded LLM-driven retries.
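The last step of the runbook calls for structured retries with exponential backoff. A minimal sketch follows. `TransientError` and the default delays are assumptions, and a real client should also honor any retry-after hint the upstream API returns.

```python
import random
import time


class TransientError(Exception):
    """Stand-in for a rate-limit or network error raised by a tool call."""


def call_with_backoff(tool, max_attempts=4, base_delay=0.5, max_delay=8.0, sleep=time.sleep):
    """Call tool() with a bounded number of attempts and exponential backoff plus jitter."""
    for attempt in range(max_attempts):
        try:
            return tool()
        except TransientError:
            if attempt == max_attempts - 1:
                raise  # give up instead of looping forever
            delay = min(max_delay, base_delay * (2 ** attempt))
            # Jitter spreads retries out so concurrent agents do not retry in lockstep.
            sleep(delay + random.uniform(0, delay / 2))
```

The `sleep` parameter is injectable so the retry schedule can be tested without waiting. The attempt cap is what prevents the unbounded retry loops that exhaust API quotas.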

Conclusion

The right infrastructure depends on data sensitivity; there is no universal answer. Use registries for public tool discovery and gateways for routing non-critical productivity tools. For enterprise AI deployments handling sensitive code, regulated data, or multi-user workflows, execution runtimes are the only viable option.

Don’t grant an AI agent unbounded service-account access to your development platforms. Prompt injection is a persistent threat, and over-permissioned agents are a vulnerability that attackers will exploit.

If you’re building multi-user agents and can’t afford to leak source code or compromise your engineering environment, explore Arcade. Arcade provides per-user authorization, agent-optimized tools, and lifecycle governance unified within a single runtime layer.

Want to give your agents secure, per-user access to enterprise tools without building auth infrastructure from scratch?

Sign up for Arcade now.

FAQ: MCP Gateways, Runtimes, and Security

What’s the difference between an MCP Registry, Gateway, and Runtime?

A registry handles tool discovery and cataloging, a gateway routes requests (often with a shared service account), and a runtime executes tools securely with per-user authorization, vaulted secrets, and audit logs.

When should I use an MCP Gateway vs an MCP Runtime?

Use a gateway for low-risk tools and fast routing with a shared identity. Use a runtime when you need per-user OAuth/OBO, RBAC, and OpenTelemetry audit logs for production agents.

Do MCP Gateways support per-user authorization (OAuth/OBO)?

Most gateways default to a shared service account and don’t provide true per-user OBO execution. If you need per-user permissions enforced at execution time, you typically need a runtime or significant custom auth middleware.

How do I prevent prompt injection from exfiltrating source code via tools?

Enforce least privilege per user, keep credentials out of the model context, and apply policy checks before and after tool calls. Runtimes reduce the blast radius by executing tool calls on behalf of the authenticated user’s limited permissions.

Can I run MCP securely in a VPC or with self-hosted tools like GitHub Enterprise?

Yes, but you need private connectivity (e.g., tunneling or peering) so internal services aren’t exposed publicly. Prefer platforms that explicitly support private infrastructure and enterprise networking patterns.

What audit logs do I need for enterprise AI agents?

You need logs that record which agent did what on behalf of whom, to which system, and when, tied to a real user identity. OpenTelemetry-compatible logs are a common requirement for traceability and incident response.
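As a rough sketch, one audit entry covering those fields might look like this. The field names are hypothetical and are not OpenTelemetry semantic conventions. Exporting the record through an OpenTelemetry SDK is left out.

```python
import json
from datetime import datetime, timezone


def audit_record(agent_id, user_id, system, action, outcome, when=None):
    """Build one structured entry: which agent did what, for whom, where, and when."""
    when = when or datetime.now(timezone.utc)
    return {
        "timestamp": when.isoformat(),
        "agent_id": agent_id,
        "user_id": user_id,
        "system": system,
        "action": action,
        "outcome": outcome,
    }


entry = audit_record(
    agent_id="release-agent",
    user_id="alice@example.com",
    system="github",
    action="create_pull_request",
    outcome="allowed",
    when=datetime(2026, 1, 15, 12, 0, tzinfo=timezone.utc),
)
print(json.dumps(entry, sort_keys=True))
```

Denied calls should be recorded too, with `outcome` set accordingly, so that incident response can see what was attempted as well as what succeeded.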

Where should secrets live in an MCP setup?

Secrets should be stored in a vault and injected only at execution time. Never place them in prompts or the model context window. The agent should receive the results of an action, not the raw credentials used to perform the action. Runtimes provide this credential isolation natively.
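A minimal sketch of that isolation follows. The `Vault` class is an in-memory stand-in for a real secrets manager, and `list_open_issues` is a fake tool, so both are hypothetical.

```python
class Vault:
    """In-memory stand-in for a real secrets manager (hypothetical interface)."""

    def __init__(self, secrets):
        self._secrets = dict(secrets)

    def get(self, user_id, service):
        """Look up the credential stored for one user and one service."""
        return self._secrets[(user_id, service)]


def list_open_issues(token):
    """Fake tool: a real one would call the service API using the token."""
    if not token:
        raise ValueError("missing credential")
    return {"open_issues": 3}  # the credential is used here and never returned


def execute_tool(vault, user_id, service, tool):
    """Fetch the credential at execution time and return only the tool's result."""
    token = vault.get(user_id, service)
    return tool(token)


vault = Vault({("alice", "github"): "example-token-not-real"})
result = execute_tool(vault, "alice", "github", list_open_issues)
```

Only `result` is passed back into the model context. The token exists inside `execute_tool` for the duration of the call and nowhere else.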

Is an “MCP Gateway” just an API gateway?

Not exactly. MCP interactions are often multi-turn and stateful, while traditional API gateways are optimized for stateless request-response cycles. If you use an API gateway, you’ll usually need additional MCP-aware middleware.

Can I use an existing API gateway for MCP?

Not directly. Traditional API gateways are built for stateless requests, whereas MCP is stateful and multi-turn. You’d need to build significant custom middleware to handle MCP’s requirements for capability negotiation and context exchange.

How do I handle SOC 2 compliance with AI agents?

Two things matter separately. First, your vendor should hold SOC 2 Type 2 certification as an enterprise procurement requirement that validates their internal controls. Second, the product itself must generate structured audit logs that record who did what, to which system, and when, tied to an authenticated user identity. The certification alone does not guarantee the per-action visibility and governance you need. Look for OpenTelemetry-compatible logs with per-user traceability as a product capability, not just a compliance claim.

Does this apply beyond engineering and DevOps teams?

Yes. The same architecture applies to any enterprise AI deployment handling sensitive data. Human Resources agents accessing Workday, finance agents accessing accounting systems, and legal agents accessing contract repositories all require per-user authorization, vaulted credentials, and audit logs. The engineering examples in this guide generalize directly to any department where agents act on behalf of authenticated users.